
I think a great many people would be surprised by how most art societies assess entries for an art exhibition.

I’ve written on this topic before in “The agony and the ecstasy of art competition judging”, which comments on the time involved in reviewing and judging 15,000 entries for the RA Summer Exhibition.

This post comments on how the pandemic has influenced how artwork now gets judged.

How entries for open art exhibitions get assessed

In person versus digital

Traditionally, the practice has been to convene a selection panel which sits in a room while handlers carry the artwork past. The speed at which the artwork moves depends on how many entries have been received and how much time has been allocated to the selection process. Bottom line: it’s not long.

Then digital entry arrived, and selection started to include a digital element. Often the “first cut” is made by assessing the images submitted by artists on screen, and the final assessment is then made by a panel working from the “longlist”.

During the pandemic, efforts were made to keep annual exhibitions going, and many switched to selection that was wholly digital and online, as well as to virtual exhibitions.

Interesting aspects of digital selection are that:

- selectors will assess much more on the basis of what they as individuals think.

- “groupthink” is instantly eliminated (assuming no discussion between selectors).

- selectors will spend the amount of time they think they need to spend on selection. It’s unlikely to be identical for everyone and there may be extremes.

The big benefits of digital selection are that:

- it cuts expenses for art societies dramatically, as there is no need to reimburse people for travel to the places where artwork is being judged. These expenses can be significant for national art societies, so this can be a welcome saving.

- once you’ve experienced it, you need a very good reason to go back to standing in front of the art to sift out the “no hopers” and the “not quite good enough”.

The main problems with digital selection are:

- people who don’t read the instructions and submit images which are too small. They’re an automatic “fail” in my book, as I can’t assess an image which is too small.

- people who digitally manipulate their images to make the work look better than it is in reality. This typically affects colour and/or tone. It is possible to detect images where this has happened. Or at least I know how… 😉

- it’s really difficult to get a sense of the size of the work. I have to make an effort to check dimensions when assessing. Any entry which fails to provide dimensions ends up leaning towards a fail for me.

Sift and sort or mark and grade?

I’m personally in favour of the very fast sort of process which eliminates the “no hopers” and fast-tracks the “dead certs”, so that the remaining judging time is spent on those which have a good chance of getting hung but need a second look.

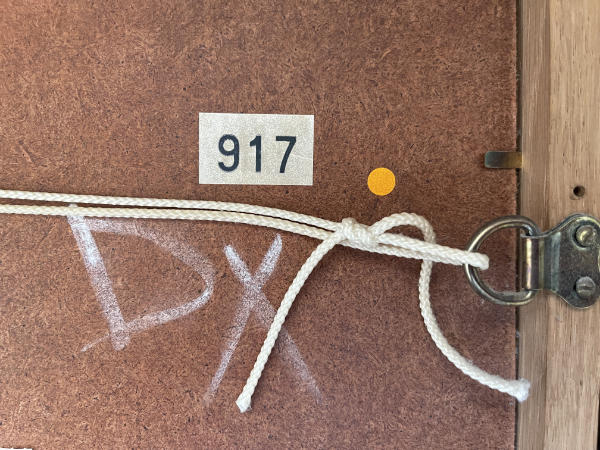

It’s why the frequent method adopted by many art societies is the Yes / No / Doubtful approach (or ✔ / ✖ / D chalked on the back) with only the Doubtfuls being reviewed again.

|

| The reverse of one of my artworks which was a D = doubtful which then got rejected = X many years ago and has hung in my home ever since! copyright Making A Mark Publications |

If you’re asking people to do selection – and not paying them – then you need to think VERY CAREFULLY about how your process impacts on their time.

If you’re paying them, then you pay the going rate!

If you’re not paying them, then avoid wasting their time.

Marking / Grading every single artwork

This year I agreed to select artwork for an annual exhibition. I said “Yes” thinking it would be like others I have judged, which have typically involved:

- identifying a specific number of artworks (within categories) and then

- identifying ones which should be considered for prizes.

I was rather surprised to find out that, for this particular exhibition, I’m expected to mark every single artwork out of 100. My marks are added to those of the other judges to determine which works are selected for hanging, so I obviously needed to come up with numbers!

I’ve not come across this approach before and needed to work out how I could be systematic and fair to all artworks and remain consistent across all entries.

(see more about how I did this tomorrow)

Which is the better method of assessment: digital or in person?

I’ve assessed and selected entries both online and through being face to face with the artwork.

To be honest, I prefer:

- selecting on my own

- from very good digital images, with a minimum and maximum size in terms of dimensions, resolution and file size

- sifting and sorting by looking through quickly and identifying the definite Yes and No responses

- spending time on the remainder to make sound decisions.

However, I do feel that hanging should still depend on a final assessment of whether the artwork in person is as good as it looked on screen.

There are those who are not above submitting “enhanced” versions of their artwork to try and get hung in an exhibition.

_____________________

Tomorrow I’ll be commenting on my method and criteria for assessing and marking artwork.
